Keyword Ranking Vs. AI Search Citation Rate

Last week, I ran a quick experiment. I pulled the top 20 traffic-driving pages from a client's blog. Every single one ranked on page one for its target keyword, had a solid click-through rate, and showed good engagement metrics. By every traditional SEO measure, these pages were performing well.

Then I tested how often those same pages were cited in AI-generated answers across ChatGPT, Perplexity, and Google's AI Overviews.

The results were not great.

A handful showed up occasionally, but most didn't appear at all. A few of the highest-traffic pages were completely invisible in AI search, even when the query matched the page almost perfectly. This is the gap most content teams don't know they have, and it's getting more expensive to ignore.

Why should you care about showing up in AI search results?

First of all, the elephant in the room: Yes, it’s still *early* to be thinking about AI search. I get that. However, the early bird advantage is very real, and the content teams working to solve for this now are getting ahead of the game.

Here’s what the data says about AI search:

It’s becoming the go-to method: More than 1 in 3 consumers (37%) now begin their searches with AI tools rather than traditional search engines (via Search Engine Land).

It’s valuable: AI search traffic converts at 14.2% compared to Google's 2.8%, roughly 5x the conversion rate per session (via Exposure Ninja).

Marketers are moving on this: 54% of US marketers plan to implement GEO (generative engine optimization) work within 3-6 months (via Superlines).

The other thing I’ll say is that AI citations aren't just a vanity metric. They're a distribution channel. When ChatGPT or Perplexity cites your content, it pulls users directly into your perspective, your framing, and your recommendations on the topic you’re working to own.

Does ranking well for keyword phrases still matter? Yes! It’s still part of the puzzle around AI search…but it’s not the whole game.

The question is: How do you close the gap between showing up on page one of Google search results and getting cited by LLMs?

How to close the gap between ranking and AI search citation

Pages with real authority and years of SEO equity behind them are getting skipped over in AI-generated answers, while newer, more specific and insightful content from competitors gets cited instead.

LLMs care more about that specificity and insight than about whether a page targets a particular keyword phrase.

The good news is that closing the gap between ranking well and getting cited doesn't require a full content overhaul. You just need to know exactly where you stand, which pages are closest to crossing over, and what's keeping them from getting cited.

Here’s how to do this assessment.

Part 1: The Visibility Gap Audit

Before you create anything new, you need to know where your existing content stands in AI search. Not where it ranks. Where it gets cited.

Step 1: Pull your top 20 pages.

Use whatever analytics tool you have and sort by organic traffic over the last 90 days. From there, grab the top 20 URLs and the primary keyword each page targets. These are your highest-leverage assets, which makes them the right place to start.
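If your analytics tool can export a CSV, the sort-and-slice in this step is easy to script. The sketch below assumes a hypothetical export with `url`, `primary_keyword`, and `organic_sessions_90d` columns; rename them to match whatever your tool actually produces. The sample rows are made up.

```python
import csv
import io

# Hypothetical column names; rename to match your analytics export.
URL_COL = "url"
KEYWORD_COL = "primary_keyword"
TRAFFIC_COL = "organic_sessions_90d"


def top_pages(csv_text: str, n: int = 20) -> list[dict]:
    """Return the n pages with the most organic traffic, highest first."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    rows.sort(key=lambda r: int(r[TRAFFIC_COL]), reverse=True)
    return [
        {"url": r[URL_COL], "keyword": r[KEYWORD_COL], "traffic": int(r[TRAFFIC_COL])}
        for r in rows[:n]
    ]


# Usage with a tiny made-up export:
sample = """url,primary_keyword,organic_sessions_90d
/blog/onboarding,b2b email onboarding,1200
/blog/churn,reduce saas churn,4300
/blog/pricing,saas pricing models,800
"""
for page in top_pages(sample, n=2):
    print(page["url"], page["keyword"], page["traffic"])
```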

Step 2: Test each page against its target query in AI search.

Run each keyword as a natural-language question in ChatGPT, Perplexity, and Google AI Overviews. (Example: ask "What are the best practices for B2B email onboarding?" rather than pasting in the exact keyword string.)

Screenshot or log whether your domain appears in the response, whether it's cited as a source, and where in the answer it shows up.
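A spreadsheet works fine for this log, but if you want a citation rate per page, a structured record makes the math trivial. This is a minimal sketch: the field names and `position` labels are illustrative assumptions, not a standard format, and "citation rate" here simply means the share of logged checks in which the page was cited as a source.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CitationCheck:
    url: str                 # your page being tested
    query: str               # natural-language version of its target keyword
    engine: str              # e.g. "ChatGPT", "Perplexity", "Google AI Overviews"
    domain_mentioned: bool   # does your domain appear anywhere in the answer?
    cited_as_source: bool    # is it listed or linked as a source?
    position: Optional[str] = None  # hypothetical labels such as "opening", "body"


def citation_rate(checks: list[CitationCheck], url: str) -> float:
    """Share of logged checks for `url` in which it was cited as a source."""
    relevant = [c for c in checks if c.url == url]
    if not relevant:
        return 0.0
    return sum(c.cited_as_source for c in relevant) / len(relevant)


q = "What are the best practices for B2B email onboarding?"
log = [
    CitationCheck("/blog/onboarding", q, "ChatGPT", False, False),
    CitationCheck("/blog/onboarding", q, "Perplexity", True, True, "body"),
    CitationCheck("/blog/onboarding", q, "Google AI Overviews", True, False),
]
print(f"{citation_rate(log, '/blog/onboarding'):.2f}")  # cited in 1 of 3 checks
```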

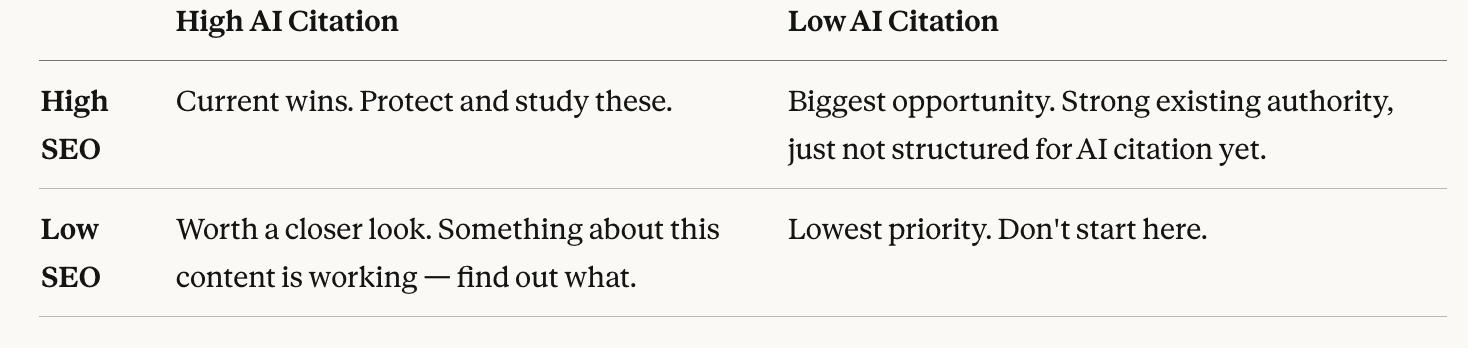

Step 3: Build a simple 2x2.

Plot each page against two axes: SEO performance (high/low) and AI citation rate (high/low). This gives you four categories:

High SEO / High AI

High SEO / Low AI

Low SEO / High AI

Low SEO / Low AI

The "High SEO / Low AI" bucket is where you'll spend most of your time. These pages already have topical authority and inbound links; the content just isn't structured in a way AI systems know how to use.
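Once each page has a traffic number and a citation rate, sorting pages into the 2x2 is mechanical; the cutoffs are the judgment call. This sketch assumes two hypothetical thresholds: "high SEO" means at or above the median traffic of the pages you pulled, and "high AI" means a citation rate of at least 0.5. Adjust both to fit your data. The page data is made up.

```python
from statistics import median

LABELS = ("High SEO / High AI", "High SEO / Low AI", "Low SEO / High AI", "Low SEO / Low AI")


def build_2x2(pages: dict[str, tuple[int, float]], ai_cutoff: float = 0.5) -> dict[str, list[str]]:
    """Sort pages into the four quadrants.

    `pages` maps url -> (organic_traffic, ai_citation_rate).
    SEO is split at the median traffic (an assumed cutoff); AI at `ai_cutoff`.
    """
    traffic_cutoff = median(traffic for traffic, _ in pages.values())
    quadrants: dict[str, list[str]] = {label: [] for label in LABELS}
    for url, (traffic, rate) in pages.items():
        seo = "High SEO" if traffic >= traffic_cutoff else "Low SEO"
        ai = "High AI" if rate >= ai_cutoff else "Low AI"
        quadrants[f"{seo} / {ai}"].append(url)
    return quadrants


pages = {
    "/blog/churn": (4300, 0.0),
    "/blog/onboarding": (1200, 0.67),
    "/blog/pricing": (800, 0.0),
    "/blog/glossary": (300, 1.0),
}
print(build_2x2(pages)["High SEO / Low AI"])  # the upgrade candidates
```

A median split keeps the buckets balanced even when every page you pulled ranks well; an absolute traffic cutoff is a reasonable alternative if you prefer it.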

Part 2: Competitive Citation Mapping

Once you know where your content stands, you need to see the full competitive landscape. The real opportunities aren't just in upgrading your own pages, but in the queries where competitors are getting cited and you're not.

Step 1: Pick 15-20 high-intent queries in your space.

These should be questions your buyers actually ask: evaluation questions, comparison questions, and how-to questions tied to your product category. Not branded terms. The informational queries where AI is most likely to generate a synthesized answer.

Step 2: Run each query and log who gets cited.

For each query, note which domains appear as sources and how often. You're looking for patterns: Are the same 3-4 competitors showing up repeatedly? Are there queries where no one is getting cited consistently? Both are signals.
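To spot the repeat competitors, it helps to count how many distinct queries each domain is cited for rather than raw citation totals, so a single query with several citations from one site doesn't skew the picture. A minimal sketch, assuming you've logged the cited domains for each query as a plain list (the domains below are placeholders):

```python
from collections import Counter


def queries_cited_per_domain(log: dict[str, list[str]]) -> Counter:
    """Count how many distinct queries each domain was cited for.

    `log` maps query -> every domain cited for it across engines and runs.
    """
    counts: Counter = Counter()
    for domains in log.values():
        counts.update(set(domains))  # count each domain at most once per query
    return counts


log = {
    "how to reduce saas churn": ["rival-a.example", "rival-b.example", "rival-a.example"],
    "best b2b onboarding email examples": ["rival-a.example", "neutral-blog.example"],
    "saas pricing model comparison": [],  # nobody cited: a possible open-territory gap
}
print(queries_cited_per_domain(log).most_common(3))
```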

Step 3: Identify your priority gaps.

Two gap types matter most:

Competitive displacement gaps: Queries where a competitor is consistently cited and you're not. This means AI systems have already decided this is a citable topic. You just need to give them a better answer to pull from.

Open territory gaps: Queries where no one is getting cited reliably. These are either too new, too niche, or too poorly covered for AI to have a preferred source yet. First-mover advantage is real here.
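If you run each query more than once (answers can vary between runs), you can label the two gap types automatically. This sketch assumes "consistently cited" means appearing in at least half of the runs for a query; that cutoff is a hypothetical choice, and the `.example` domains are placeholders.

```python
from collections import Counter


def classify_query(runs: list[set[str]], your_domain: str, consistency: float = 0.5) -> str:
    """Label one query from repeated runs; each run is the set of domains cited.

    A domain counts as "consistently cited" if it appears in at least
    `consistency` of the runs (an assumed cutoff, not a standard).
    """
    if not runs:
        return "open territory"
    counts = Counter(domain for run in runs for domain in run)
    consistent = {d for d, n in counts.items() if n / len(runs) >= consistency}
    if your_domain in consistent:
        return "already cited"
    if consistent:
        return "competitive displacement"  # a rival is already the preferred source
    return "open territory"  # no reliable source yet


you = "you.example"
print(classify_query([{"rival.example"}, {"rival.example", "other.example"}, {"rival.example"}], you))
print(classify_query([{"a.example"}, {"b.example"}, {"c.example"}], you))
```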

Step 4: Audit the pages that ARE getting cited.

Go read them. Specifically look for:

Is there a direct answer in the first 1-2 sentences under each heading?

Is the content structured so a single paragraph can be extracted without losing context?

Does it include specific data, named frameworks, or an original perspective that AI can attribute to a source?

That's your upgrade checklist.

How to Upgrade a Page for AI Citation

You don't need to rewrite everything; you just need to make your content extractable by LLMs. Here's what Nick Lafferty, Head of Growth Marketing at Profound, says about it:

“It sounds simple, but check to make sure AI agents can access all of your pages. From there, ensure you have enough coverage of bottom funnel content where most purchase decisions happen. Do you have comparative content against your competitors? If not, Answer Engines will pull content from somewhere else to give an answer on why your product is better (or worse) than your competition.”
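One quick way to act on the first point is to check whether your robots.txt blocks the crawlers AI products use. The sketch below relies on Python's standard-library `urllib.robotparser`. The user-agent tokens are ones the vendors have published (OpenAI's `GPTBot` and `OAI-SearchBot`, Perplexity's `PerplexityBot`, and Google's `Googlebot`, whose Search index AI Overviews draw on), but treat the list as an assumption and confirm it against each vendor's current documentation. robots.txt is also only one gate: login walls and content that only renders client-side may still hide a page.

```python
from urllib.robotparser import RobotFileParser

# Assumed crawler tokens; verify against each vendor's current docs.
AI_CRAWLERS = ("GPTBot", "OAI-SearchBot", "PerplexityBot", "Googlebot")


def blocked_crawlers(robots_txt: str, page_url: str) -> list[str]:
    """Return the crawler tokens that this robots.txt disallows for page_url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_CRAWLERS if not parser.can_fetch(bot, page_url)]


robots = """User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
print(blocked_crawlers(robots, "https://www.example.com/blog/onboarding"))
```

For a live site, `RobotFileParser` can also load the file itself via `set_url()` and `read()`.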

A few other specific changes that move the needle:

Add a direct answer in the first two sentences under each H2. AI systems pull from section openings. If the first sentence under a heading is a transition phrase or throat-clearing, you've already lost the citation. Lead with the answer.

Include at least one specific data point per section. Numbers, percentages, time frames. AI models prefer content they can attribute and verify. Generic statements get skipped. Specific claims get cited.

Add something only you can say. A named framework. A proprietary process. A stat from your own research or client work. This creates attribution pressure: AI is more likely to name the source because the insight doesn't exist anywhere else.

Use clean, descriptive H2s that mirror how people ask questions. "How to audit your content for AI visibility" will get pulled more reliably than "Our Proven Content Audit Process."
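A couple of these checks are mechanical enough to script against a draft. The sketch below assumes a Markdown draft with `## ` H2 headings and flags two things per section: no digits at all (a rough proxy for "no specific data point") and a section opening that starts with a filler phrase. The filler list is a hypothetical starting point, and neither check can tell you whether the answer is actually good; it only surfaces sections worth a second look.

```python
import re

# Hypothetical throat-clearing openers; extend with your team's habits.
FILLER_OPENERS = ("in this section", "let's dive", "before we get into", "it's no secret", "as we all know")


def split_h2_sections(markdown: str) -> dict[str, str]:
    """Map each '## ' heading to the body text that follows it."""
    sections: dict[str, str] = {}
    current = None
    for line in markdown.splitlines():
        if line.startswith("## "):
            current = line[3:].strip()
            sections[current] = ""
        elif current is not None:
            sections[current] += line + "\n"
    return sections


def lint_sections(markdown: str) -> dict[str, list[str]]:
    """Return heading -> warnings, only for sections that may be hard to cite."""
    report: dict[str, list[str]] = {}
    for heading, body in split_h2_sections(markdown).items():
        warnings = []
        if body.strip().lower().startswith(FILLER_OPENERS):
            warnings.append("opens with filler instead of a direct answer")
        if not re.search(r"\d", body):
            warnings.append("no numbers: consider adding a specific data point")
        if warnings:
            report[heading] = warnings
    return report


draft = """## How to audit your content for AI visibility
Start with your top 20 pages by organic traffic over the last 90 days.

## Why structure matters
In this section, we'll explore why structure matters.
"""
print(lint_sections(draft))
```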

The mindset shift for getting your content cited in AI search

You don't need to rebuild your entire content library, but you do need to know where the gaps are. A few targeted upgrades can shift visibility in a way that traditional SEO metrics would never reveal.

If you’re working to win more AI citations in 2026, be strategic: audit what you already have, then make it citable.